The cloud does not cancel out physics. Services still depend on specific data centers' redundancy, power, network paths, and people. Events in 2025–2026 reminded the market that cloud infrastructure resilience is still physical.

In March 2026, the Associated Press reported that drone strikes damaged several Amazon Web Services facilities in the UAE and Bahrain.

The problem isn’t limited to wars or physical attacks. Even without an external crisis, a complex internal failure is enough.

In its official post-event summary, AWS described an incident in us-east-1 on October 19–20, 2025, when a defect in DNS automation for DynamoDB caused endpoint resolution failures, elevated API errors, and a cascade of downstream effects for EC2 launches and other services.

Google Cloud also reported nearly two hours of elevated latency and errors in its us-east1 region in July 2025.

Incidents like these are usually the point where a regional outage stops looking like a rare event and starts exposing a deeper problem: too much of your infrastructure still depends on a single location.

Why a Single Region Stops Looking Like a Safe Bet

Even if your application can survive the loss of one zone, it may not survive a broader regional issue. The AWS us-east-1 incident in 2025 is a clear example of how regional dependencies and service cascades can disrupt operations far beyond one component.

In ad and partner operations, latency hurts just as much as downtime. Research published by Google in 2017 found that as mobile page load time goes from 1 second to 3 seconds, the probability of a bounce increases by 32%.

The next question is: what breaks first when that dependency becomes visible in day-to-day operations?

What Breaks First When Single-Region Hosting Has Problems

When your only region becomes unstable, you start rerouting access, shifting traffic, and improvising around the outage.

If partner APIs, payment processors, affiliate platforms, or bank-side systems expect traffic from allowlisted addresses, a regional incident quickly turns into an access-control problem. Without a second location and endpoint already prepared, failover becomes a lockout.
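One way to catch this before an incident is to compare the egress addresses of your standby location against each partner's allowlist. The snippet below is a minimal sketch, not a finished tool: the function name `uncovered_egress_ips`, the allowlist entries, and the IP addresses (taken from documentation-only ranges) are all hypothetical, and in practice the allowlist would come from whatever each partner has actually confirmed.

```python
import ipaddress


def uncovered_egress_ips(egress_ips, partner_allowlist):
    """Return the egress IPs that no allowlist entry (single IP or CIDR) covers."""
    networks = [ipaddress.ip_network(entry, strict=False) for entry in partner_allowlist]
    missing = []
    for ip in egress_ips:
        addr = ipaddress.ip_address(ip)
        if not any(addr in net for net in networks):
            missing.append(ip)
    return missing


# Hypothetical data: what the partner has allowlisted, and where traffic leaves from.
partner_allowlist = ["203.0.113.10/32", "198.51.100.0/28"]
primary_egress = ["203.0.113.10"]
standby_egress = ["198.51.100.5", "192.0.2.44"]

print(uncovered_egress_ips(primary_egress, partner_allowlist))  # []
print(uncovered_egress_ips(standby_egress, partner_allowlist))  # ['192.0.2.44']
```

Any address in the second list is one a partner would reject during failover, so it needs to be registered with the partner before the standby site is ever used.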

A single-region setup forces more of your traffic to travel farther, just as you’re trying to stabilize operations. A CDN can speed up static assets, but it doesn’t fix dynamic round-trip times for tracking, postbacks, anti-fraud checks, partner APIs, or app requests. If your origin server is far from both the end user and the partner, your return on ad spend (ROAS) starts leaking through delays and retries.
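A rough calculation shows why distance matters for dynamic requests. A fresh HTTPS connection typically needs about three network round trips before the response arrives: one for the TCP handshake, one for the TLS 1.3 handshake, and one for the request and response. The sketch below assumes exactly that, with no connection reuse; the round-trip times and the 40 ms of server processing are illustrative numbers, not measurements.

```python
def fresh_https_request_ms(rtt_ms, server_ms, round_trips=3):
    """Estimate time to first response on a new HTTPS connection.

    round_trips=3 assumes TCP handshake + TLS 1.3 handshake + request/response,
    without connection reuse or 0-RTT resumption.
    """
    return round_trips * rtt_ms + server_ms


# Illustrative values only: a distant origin vs. a regional node.
print(fresh_https_request_ms(rtt_ms=150, server_ms=40))  # 490
print(fresh_https_request_ms(rtt_ms=20, server_ms=40))   # 100
```

With identical server work, the distant origin spends most of its time on the wire, and every retry pays that cost again.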

Isolation also starts to collapse fast. When one region has problems, you don’t want unrelated accounts, client environments, or market-specific setups converging onto the same emergency path, creating shared signals, shared risk, and more review friction.

Finally, your rollout process becomes the bottleneck. When you need to bring up the same stack in 10 or 20 markets, single-region hosting starts to look manual. You need a repeatable way to launch another regional node or isolated environment quickly, and you need to uncover hidden taxes, like blocked outbound SMTP on port 25, before you’re in recovery mode rather than after.
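Restrictions like a blocked port are cheap to detect ahead of time. As a minimal sketch, a preflight script run on every new node can try the outbound connections your stack depends on and report which ones fail. The helper names `can_connect` and `preflight` are hypothetical, and a successful TCP connection only shows the port is reachable, not that the remote service will accept your traffic.

```python
import socket


def can_connect(host, port, timeout=5.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False


def preflight(targets, timeout=5.0):
    """Check each (name, host, port) target and return {name: reachable}."""
    return {name: can_connect(host, port, timeout) for name, host, port in targets}
```

On a freshly provisioned node you might call `preflight([("outbound-smtp", "mail.example.com", 25)])`, where the hostname is a placeholder for your own mail relay, and treat any `False` as a blocker before the node joins a failover plan.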

What You Reconsider After a Cloud or Single-Region Incident

The first thing you reconsider after a regional incident is concentration risk. If critical services, partner-facing endpoints, and automation pipelines all sit in one cloud region, a regional incident can become a company-wide incident. That pushes teams toward splitting workloads across multiple locations, even if only part of the stack is duplicated.

The second is server disaster recovery. Backups alone are not disaster recovery: they store data, but they don’t give you a live recovery path with a defined recovery time objective (RTO) and recovery point objective (RPO).
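To make the difference concrete, you can estimate what a backup-only setup actually delivers and compare it with your targets. The sketch below uses a simplified model: worst-case RPO is the backup interval plus the time a backup takes to reach offsite storage, and RTO is the sum of detection, provisioning, restore, and validation time. The class name, field names, and every number are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RecoveryPlan:
    backup_interval_min: float  # time between backup starts
    backup_lag_min: float       # time for a backup to finish and land offsite
    detect_min: float           # time to notice and declare the incident
    provision_min: float        # time to get replacement servers
    restore_min: float          # time to restore data onto them
    validate_min: float         # time to verify and switch traffic

    def worst_case_rpo(self):
        """Largest data-loss window, in minutes, under this simplified model."""
        return self.backup_interval_min + self.backup_lag_min

    def estimated_rto(self):
        """Estimated minutes from failure to restored service."""
        return self.detect_min + self.provision_min + self.restore_min + self.validate_min


def meets_targets(plan, rpo_target_min, rto_target_min):
    """Compare the plan's estimates against target RPO and RTO (minutes)."""
    return {
        "rpo_ok": plan.worst_case_rpo() <= rpo_target_min,
        "rto_ok": plan.estimated_rto() <= rto_target_min,
    }


# Hypothetical example: nightly backups, servers provisioned only after the incident.
nightly = RecoveryPlan(1440, 30, 15, 60, 120, 30)
print(nightly.worst_case_rpo())        # 1470
print(nightly.estimated_rto())         # 225
print(meets_targets(nightly, 60, 240)) # {'rpo_ok': False, 'rto_ok': True}
```

In this made-up case the restore is fast enough, but up to a day of data can be lost, which is the gap a standby environment with more frequent replication is meant to close.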

The third is whether all workloads really belong in one cloud provider. After a region-specific incident, you may start looking at virtual private or dedicated servers as a second infrastructure layer, or a multi-regional server setup. That layer can be used for standby environments, backup sites, region-specific nodes, fixed-IP integrations, or workloads that need cleaner isolation and more predictable network behavior.

Why You Start Looking Beyond a Single Cloud Footprint

Not every workload has to leave the cloud, and the benefits of a multi-cloud strategy are still valid. The point is to stop concentrating all critical functions in one place. Once that becomes clear, multi-region deployment, a real DR site, and selected workloads on VPS or dedicated servers start to look like the practical next step.

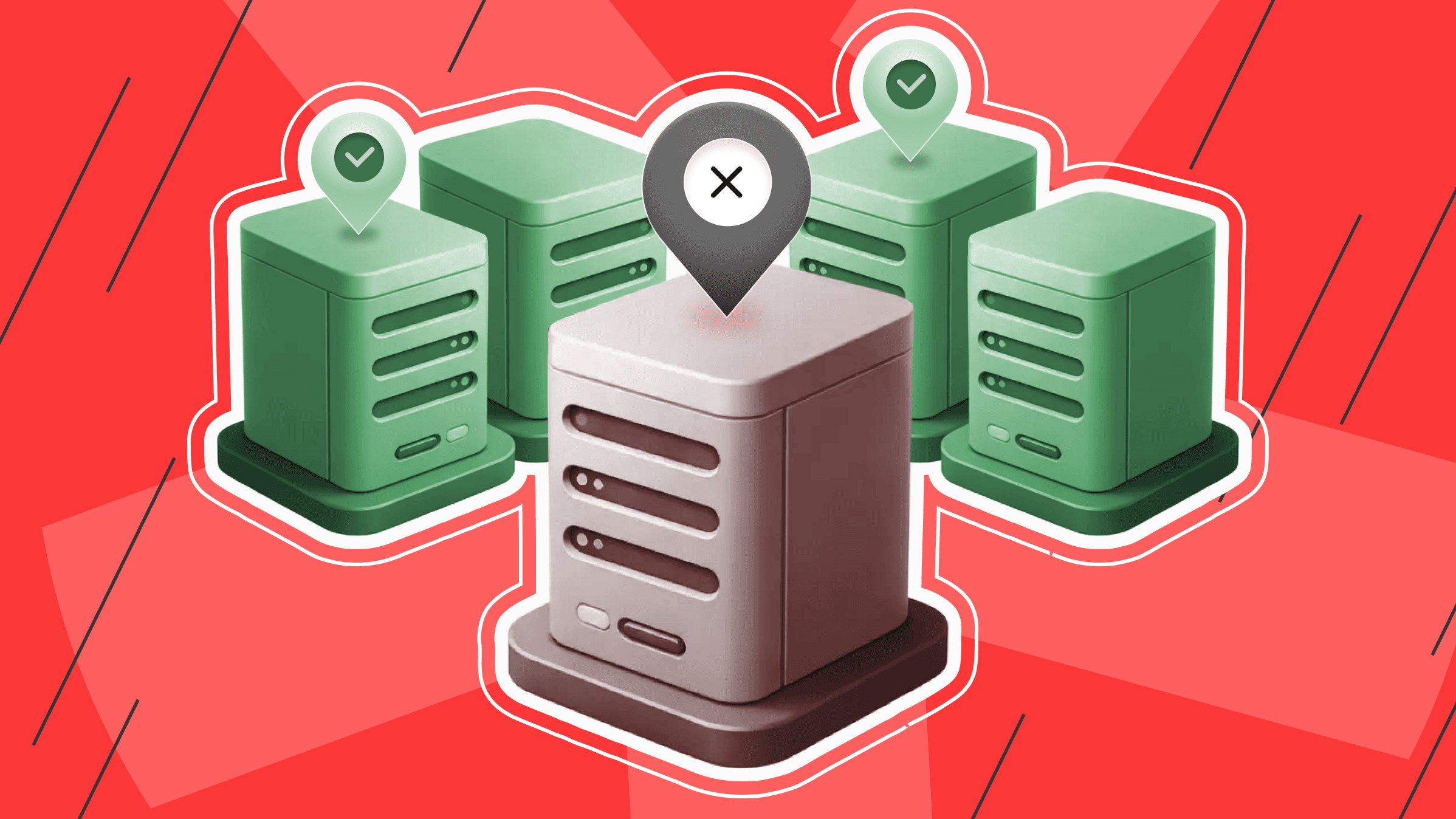

How Distributed Server Infrastructure Improves Server High Availability

Multi-region infrastructure improves availability by letting you separate functions by geography rather than forcing everything into a single failure domain.

You can keep elastic workloads in the cloud while using geo-distributed servers for failover capacity, backup environments, static-IP services, and regional nodes closer to target markets.

That’s why regional outages often change not only recovery plans, but also server failover architecture decisions.

When you have multi-region infrastructure, you’re not trying to recover everything within the same failure domain.
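The decision logic behind such a setup can be very small. As a sketch under stated assumptions, the function below walks a priority-ordered list of sites and returns the first one whose consecutive failed health checks are below a threshold. The site names, the threshold of three, and the idea that some external monitor supplies the failure counts are all hypothetical; real failover also involves DNS or load balancer changes that are not shown here.

```python
def pick_active_site(priority, failed_checks, fail_threshold=3):
    """Return the first site in priority order that is still considered healthy.

    failed_checks maps site name -> consecutive failed health checks;
    sites missing from the mapping are treated as having zero failures.
    Returns None when every site has reached the threshold.
    """
    for site in priority:
        if failed_checks.get(site, 0) < fail_threshold:
            return site
    return None


# Hypothetical sites: a cloud region first, then two independent standby locations.
priority = ["cloud-primary", "vps-standby-eu", "vps-standby-asia"]

print(pick_active_site(priority, {"cloud-primary": 0}))  # cloud-primary
print(pick_active_site(priority, {"cloud-primary": 5}))  # vps-standby-eu
print(pick_active_site(
    priority,
    {"cloud-primary": 5, "vps-standby-eu": 3, "vps-standby-asia": 4},
))  # None
```

The useful property is that the standby sites sit outside the primary's failure domain, so a regional incident only changes which entry in the list is selected.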

At is*hosting, our VPS and dedicated servers across 40+ countries can help you add a second layer for failover, DR, and regional deployment.
