Artificial intelligence and machine learning are multibillion-dollar technologies that have the potential to improve people's lives and that, in principle, can be applied to almost any field. But is this really the case?

Process automation based on artificial intelligence has indeed found its way into many areas, including manufacturing, where it has reduced costs and generally increased efficiency. The rapid development of machine learning has opened these technologies up to the public, widening the range of possible applications.

Alongside small domestic projects in many countries, international companies have given people tools to generate almost any kind of media content.

So what could be wrong with the development of AI? On closer inspection, there are real ethical problems. Today, these problems are being voiced openly by a number of well-known individuals who are calling for the development of machine learning technology to be paused in order to "save" society.

What is the use of artificial intelligence?

Artificial intelligence and machine learning are already having a significant impact on various industries. From healthcare to agriculture, AI and ML are changing the way we work and live. Why do people use AI?

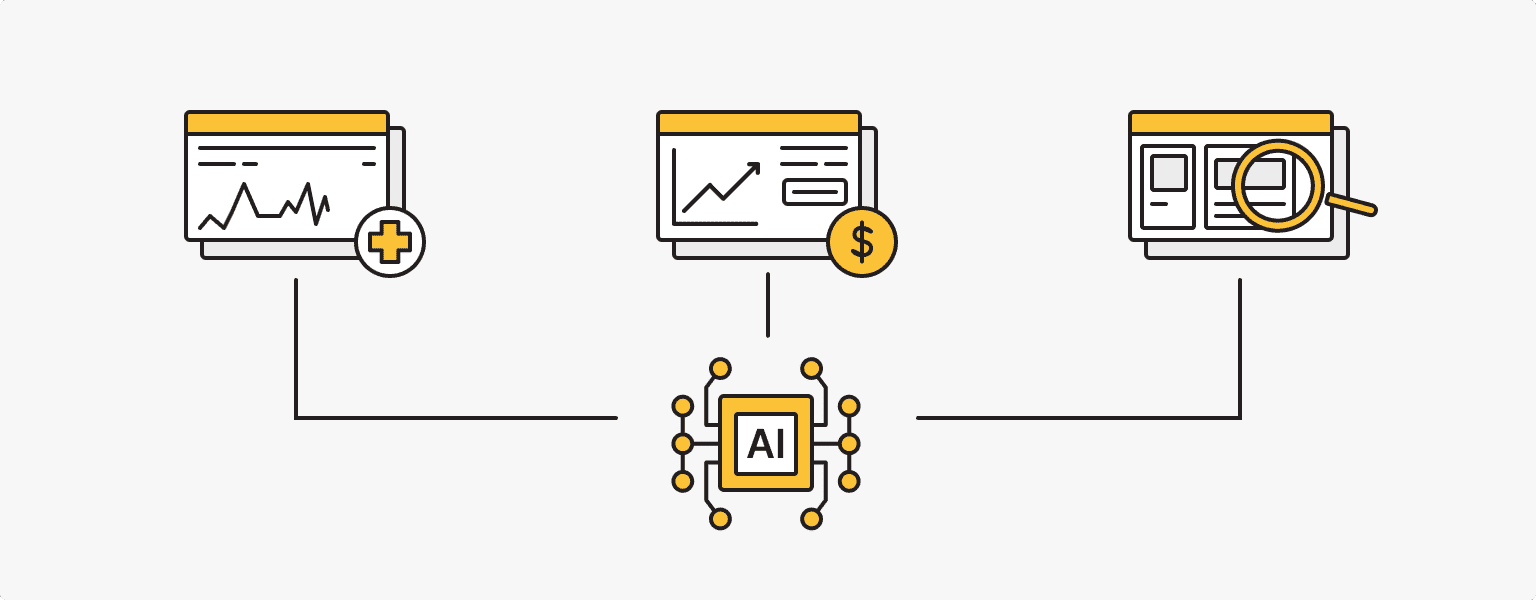

In healthcare, artificial intelligence can analyze vast amounts of medical data to identify patterns and predict outcomes, allowing professionals to develop more effective, tailored treatment plans. Doctors can also back up their findings with the results of AI-powered tools.

In finance, AI is being used to analyze many types of financial data, including stock quotes, to detect fraudulent activity and to support investment decisions. Businesses and individuals can make more accurate financial decisions, saving time and money.

Marketers, designers and influencers use artificial intelligence to create personalized marketing campaigns and original media content, analyze the results, identify target audiences and optimize their strategies. The result is increased audience engagement and improved performance.

AI is helping logistics by optimizing traffic flows, improving navigation systems and supporting the development of autonomous vehicles. By using this technology to analyze traffic and make appropriate decisions, cities can reduce congestion and improve overall traffic flow. Autonomous and semi-autonomous vehicles are also expected to make roads safer, and in some cases already do.

The introduction of machine learning has significantly improved many production lines in terms of detecting and preventing equipment failures and improving product quality.

The applications of AI and ML don't stop there. They are being used almost everywhere, automating and improving many processes in ways that humans can't.

Problems with artificial intelligence

Despite the clear benefits of artificial intelligence, gaps in its legal and ethical regulation are becoming increasingly visible. The main ethical concerns with AI systems are:

Bias of AI

Artificial intelligence learns from the information that its owner or the average user "feeds" it, so the AI is only as impartial as its data is objective. This raises basic ethical questions about morality, law and so on. Just ask the AI to imagine that there is no law protecting personal data: what will stop it from revealing personal information?

Bias can also be built into the AI's algorithms themselves, for example when answers to questions are based on a single study that the model was trained on. In addition, AI can generate untrue information, which can feed propaganda and flood any media space with false data.
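As a rough illustration of the idea that a model is only as impartial as its data, here is a minimal Python sketch. Everything in it is hypothetical: the group names, the labels, the counts, and the trivial "majority vote" model, which is a stand-in chosen for clarity and not a description of how any real AI system works.

```python
from collections import Counter, defaultdict


def train_majority_model(examples):
    """Learn, for each group, the label seen most often in the training data."""
    counts = defaultdict(Counter)
    for group, label in examples:
        counts[group][label] += 1
    # The "model" is just a lookup table: group -> most frequent label.
    return {group: c.most_common(1)[0][0] for group, c in counts.items()}


def predict(model, group):
    """Return the label the model learned for this group."""
    return model[group]


# Hypothetical, deliberately skewed history of past decisions:
# (applicant group, decision). The numbers are invented for illustration.
skewed_data = (
    [("group_a", "approve")] * 90 + [("group_a", "reject")] * 10
    + [("group_b", "approve")] * 20 + [("group_b", "reject")] * 80
)

model = train_majority_model(skewed_data)

# The model simply reproduces the imbalance present in its training data.
print(predict(model, "group_a"))  # approve
print(predict(model, "group_b"))  # reject
```

Nothing in the code is "prejudiced" on its own. The skew comes entirely from the examples it was given, which is the point the section makes about training data.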

Who is responsible for the AI results?

Generative AI systems such as ChatGPT, DALL-E 2, Stable Diffusion and Midjourney use enormous amounts of data to do their work, but can still produce incorrect or unethical results. When that happens, it is unclear who should take responsibility for what the AI has generated.

When it comes to the data used to train AI, there is also the issue of security. Who will protect ordinary users from data leakage?

The Office of the Privacy Commissioner of Canada has announced an investigation into OpenAI, the developer of ChatGPT. The process was initiated in response to a complaint about the collection, use and disclosure of personal information without users' consent.

Something similar happened in Italy, where the data protection authority temporarily blocked access to ChatGPT in the country while it investigated possible violations of the law by OpenAI. The regulator questioned whether there is a legal basis for training AI on personal information.

It has been suggested that OpenAI may be violating European Union privacy laws. The EU has been discussing and amending the Artificial Intelligence Act, which is supposed to regulate these issues, for months. However, this key legislation has yet to be passed and may be delayed until next year.

Security issues

In addition to the use and leakage of user data from AI applications, the danger that this technology poses to other services should not be underestimated.

Generative AI can be used to create fake data that can potentially bypass cloud security systems. Generated content can then be used to launch attacks on a system, manipulate captured data, and generally cause damage.

Companies attacked by hackers who use AI will need to take steps to improve their security. The most effective solution for detecting such attacks is likely to be another machine-learning tool.

The result is a vicious circle for which there is no international legal regulation.

Disappearance of professions

The displacement of workers by technology is an acute moral issue that has existed for several hundred years, and the arrival of AI has accelerated it many times over.

International consulting firms McKinsey and PricewaterhouseCoopers predict that artificial intelligence will replace about a third of today's jobs within 10 years. The US and Japan will be hit the hardest.

Translators, drivers, couriers, bankers, travel agents, salespeople, copywriters, some programmers, and many others may soon be out of work.

But is it necessary to automate work that is a source of pleasure to so many people?

Examples of unethical use of AI

In the hands of unscrupulous individuals, AI tools can become a kind of weapon. Examples of unethical use of artificial intelligence include:

- Companies may use AI to monitor and spy on people without their knowledge or consent, violating their right to privacy. This also covers how companies use the information that users provide to an AI application.

- AI systems can reinforce existing societal prejudices, which hinders the elimination of discrimination and worsens relations within society.

- AI can be a tool to create complex phishing scams and various forgeries that can be used to deceive and defraud.

- AI capabilities can be used to create targeted disinformation campaigns and manipulate public opinion.

- The development of autonomous tools capable of making life-and-death decisions without human intervention raises ethical concerns about the accountability of these systems.

Who is against artificial intelligence: real-life examples

“Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization?”

These are the questions that the authors of the open letter "Pause Giant AI Experiments", published by the Future of Life Institute, ask their readers to reflect on. They directly and indirectly affect the ongoing use of artificial intelligence and machine learning.

The letter, published on 22 March 2023, calls on all AI labs to immediately stop training AI systems that are more powerful than GPT-4 for at least 6 months.

AI systems have the potential to have a significant impact on humanity, which calls for the orderly use of resources and development of technology, generally accepted international legal regulation and defined safety standards. The letter points out that these criteria are not currently being met, which could undermine existing ethical standards and lead to significant problems.

Why is a pause of 6 months necessary?

“AI labs and independent experts should use this pause to jointly develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts.

“AI research and development should be refocused on making today's powerful, state-of-the-art systems more accurate, safe, interpretable, transparent, robust, aligned, trustworthy, and loyal.”

Elon Musk (CEO, SpaceX, Tesla and Twitter), Steve Wozniak (co-founder, Apple), Connor Leahy (CEO, Conjecture), Evan Sharp (co-founder, Pinterest), Chris Larsen (co-founder, Ripple), Emad Mostaque (CEO, Stability AI) and over 18,000 other people signed the open letter.

The letter was followed by a response from Bill Gates, the co-founder of Microsoft. Microsoft currently holds a leading position in the AI sector thanks to its massive multi-year, multi-billion dollar investment in OpenAI, an AI research company.

Gates said that there are some challenging areas in AI, but asking a select group of people to stop development will not solve the problems.

The AI controversy: for or against?

The benefits of artificial intelligence and machine learning are undeniable, and their use has improved many industries and taken businesses, including information technology, to the next level. Many services are using artificial intelligence to improve the user experience and have made significant advances in security.

However, questions remain about the legal and ethical regulation of the development and evolution of AI tools. Resolving them could increase the benefits society derives from AI, but it requires coordinated action from both governments and private companies.
